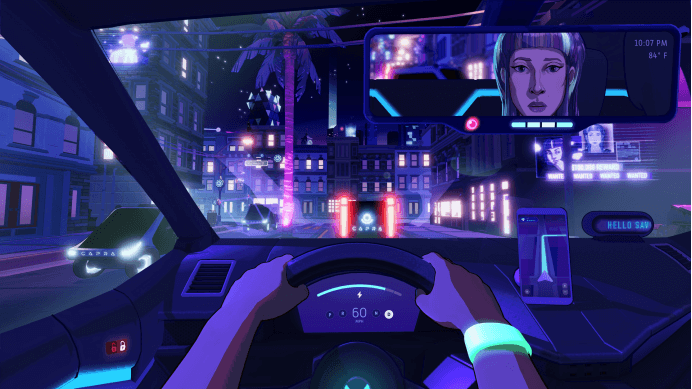

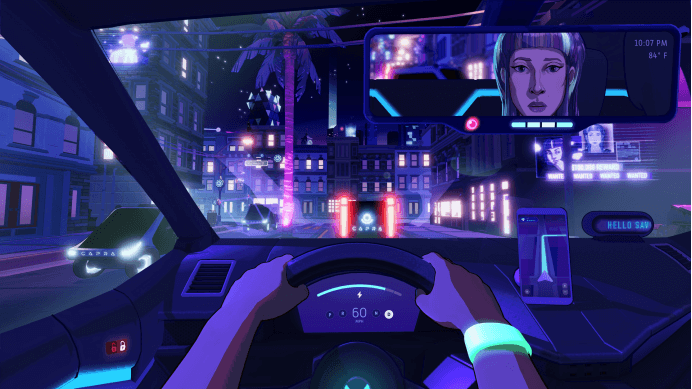

It is 2:17 a.m. and I have 23% of my battery left. A new order pops up on the car's screen, with “4.2 stars” next to the passenger’s avatar and an empty notes field. I stare at the number for three seconds, my finger hovering over the “accept” button, the steady chill of the air-conditioning vent on my fingertips. In _Neo Cab_, I’m not driving. I’m sliding along an invisible track woven from algorithms, ratings, and company terms of service, and the only fuel I can call “myself” is that last bit of charge and the news that Savannah, my best and only friend, has just disappeared.

The Lina I play is probably the last human ride-hail driver in the tech utopia called “Los Ojos”. My car is an old electric vehicle. My survival gauge is the constantly draining battery bar in the upper left corner, and my social currency is the one-to-five-star rating each passenger leaves after getting out of the car. Five stars mean more orders, higher income, and valuable “emotional points”, a virtual currency that lets me buy clues about Savannah’s disappearance from a mysterious informant in the in-car chat app. Every choice, every line of dialogue, becomes a precise calculation: should I cater to a passenger’s political views, or hold my own position? Should I pry into their private lives for clues, or keep a professional silence? My “sincerity” has become a resource to be carefully allocated, and my rating is the market’s instant verdict on how well I perform it.

The most suffocating design is the game’s “emotion management system”. Lina wears an emotion-monitoring bracelet called “Feelgrid”. My emotional state (calm, anxious, angry, sad) is displayed in real time as shifting colors and is directly affected by my dialogue choices. A fierce argument may make the bracelet flash red and raise my “anxiety”, which in turn affects which dialogue options unlock later; forcing it to stay a “calm” green may mean swallowing my words and offering agreement I don’t mean. The system externalizes inner feeling into a manageable UI element. It silently declares that in this city, even your emotions are means of production to be optimized and controlled. After finishing a ride, I often look at the purple pulse left on the bracelet by anger I forced down, and feel a deep alienation: am I, too, learning to convert the authenticity of my emotions into an “emotional exchange rate” more conducive to survival?

The passengers are a cross-section of the city. There are fanatical techno-optimists who firmly believe that human augmentation is the inevitable next step of evolution; rebellious hackers who distrust the system; former workers left unemployed by the wave of automation; and well-dressed corporate employees who are numb to the real pain beyond their screens. Their stories arrive in my night in fragments, inside the moving, enclosed space of the car. There is no epic plot, only one twenty-minute ride after another, and countless small choices about whether to lie, whether to ask, and whether to comfort. The final judgment on those choices is the cold star rating. I once got five stars for comforting a passenger who was falling apart, and I once got a one-star review for refusing to laugh along with a corporate executive’s political joke; the review said “the driver lacks a sense of humor”. My “service” is no longer about getting someone safely to a destination, but about whether I successfully play the part of a real-time mirror for the passenger’s emotional needs.

As the battery runs lower and lower, the clues to Savannah’s whereabouts point to the darkest corner of this bright city: a giant company called “Capra”, and the systematic removal of the “maladjusted” that may lie behind it. I have to walk an ever-narrowing tightrope between earning enough charge to get by, keeping my rating high enough to unlock advanced conversations, and digging for the truth at the risk of angering both passengers and the system. Late in the game, when I finally obtain the key evidence but face the threat of being permanently banned and losing all income, the flashing battery icon becomes the cruelest metaphor for my whole existence: in this system, the price of staying “connected” and “online” may be to keep handing over the right to define myself.

The night I finished the game, I quit and opened a ride-hailing app on my phone. The familiar rating screen suddenly looked extremely sharp, even a little glaring. _Neo Cab_ did not give me the thrill of saving a friend or exposing a conspiracy. Over dozens of nights of driving, it simply steeped me in the slow emotional wear that a rating system produces. It made me realize that once we are used to quantifying a service, a relationship, or even an experience in stars, we may also be unknowingly placing ourselves and others in a spacious, transparent cage called “quantifiability”. Perhaps the most disturbing part is that we are not only the prisoners inside it, but also each other’s jailers.